Sharing our knowledge one article at a time

Our blog is meant to share our team's most recent knowledge and findings in development, programming, new technology, productivity tips and much more.

One goal in mind: empower our clients, their peers and their teams.

How AI Is Revolutionizing Software Development: Trends and Applications

Conscious Leadership in Digital Transformation

Explore the crucial role of conscious leadership in digital transformations, delving into its philosophy and concrete applications.

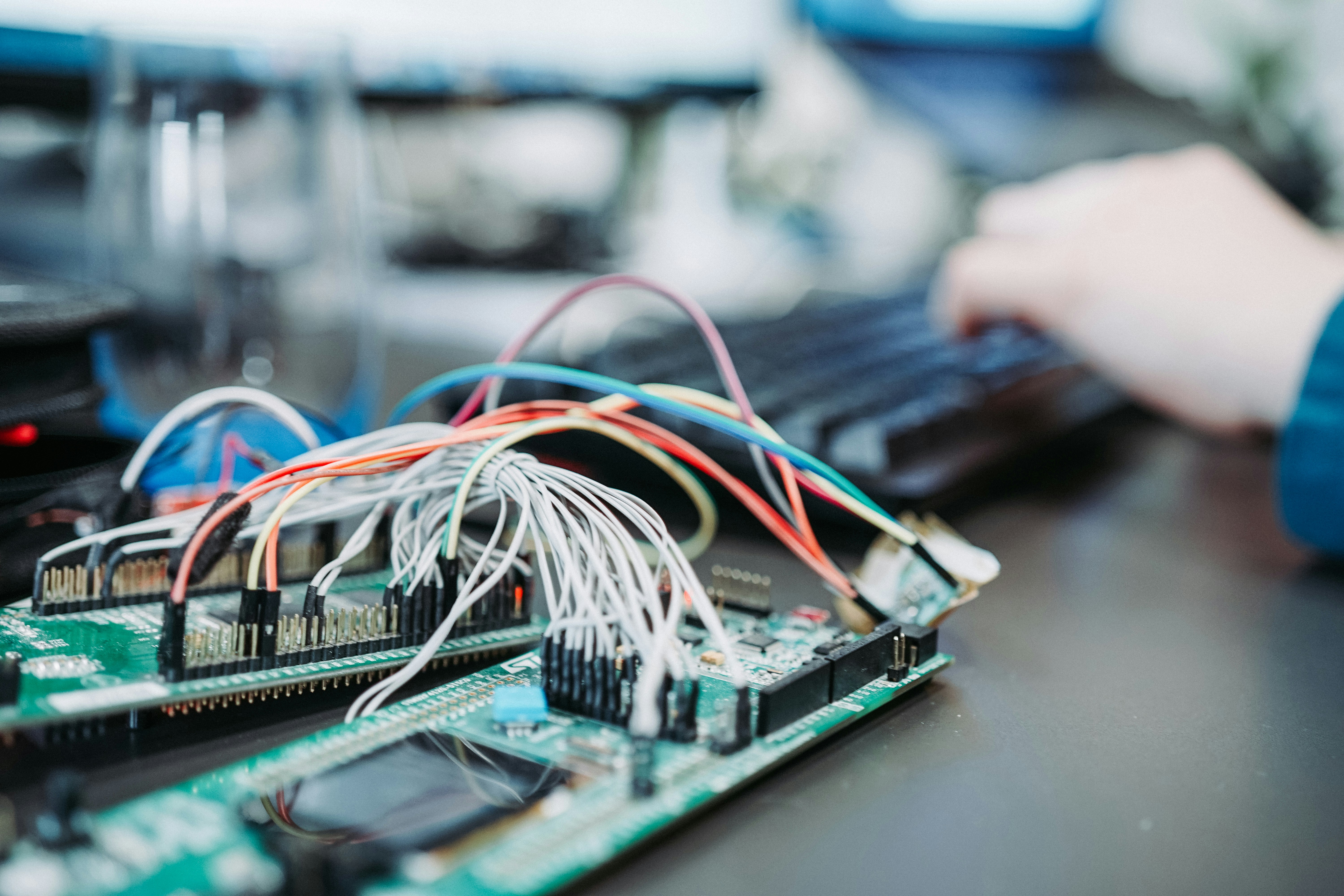

The Internet of Things (IoT) and Digital Transformation: Connecting the Physical and Digital Worlds

Explore how IoT revolutionizes industries by enabling data-driven decision-making and automation. Delve into the challenges and opportunities of connecting physical and digital worlds.

Leadership in Digital Transformation, a Critical Role!

With the relentless digital transformations of our times, leadership is emerging as the essential catalyst for change and organizational success. Let’s dive into the different aspects of leadership in this context to discover how, through its strategic vision, its culture of innovation and its investment in digital talent, it is the essential driver of successful...

Custom Software Development in the Age of Cloud Computing

It’s a safe bet that you’ve already addressed cloud computing in your daily operations. However, do you know how the use of these online services interacts with custom software development? Let’s delve deeper into different aspects of this type of development in the cloud context, what it allows, its advantages, the challenges to keep in...

Segmentation For Sustainability

Need to add functionality to an old coding language? Why not think outside the box! Of course, it’s always fun to start a new project. Choosing the architecture and technologies with the client’s current and future needs top of mind is always rewarding for us! However, not all of these projects start...

Three tips for dealing with the IT labour shortage

If you’re reading this page, it’s probably because you too have been affected by the new labour shortage “pandemic” that began a few years ago and has been impacting large companies’ information technology recruitment efforts as they attempt to find qualified workers. The labour shortage has hit the IT sector hard. This new pandemic has...

Did you know that your technology may be outdated?

Are you overwhelmed with internal requests? Is your IT support team overwhelmed? Are you still able to deliver to your customers? Are your employees frustrated? Very often, these situations are related to tools that are no longer adequate or to aging technology that no longer meets the changing needs of the company. Our team at Done advises...

Web development: Why is Blazor the ideal framework?

Blazor. In early November 2018, when it was still an experimental product, I spoke about Blazor at an event we had organized at our office. I even surprised many colleagues when I revealed at the end that my PowerPoint presentation had been prepared with Blazor. I followed the evolution of this...

OCTAS finalists 2021: Solotech

We are incredibly thrilled to announce that our esteemed client, Solotech, has emerged as a finalist for an OCTAS award in the esteemed category of “Business Solution – Private Enterprises of all sizes” with their groundbreaking “Service +” solution. The OCTAS Awards stand as a testament to excellence in IT and digital innovation, recognizing outstanding...

Custom software: the importance of having a sound control tool

Depending on your business needs, creating custom software can prove to be an expensive project. To prevent this from turning into a financial sinkhole, one of the tips we discussed in our previous article is to equip yourself with monitoring tools. Having the right tools at your disposal means putting more chances on your...

Five tips to avoid software development cost overruns

Companies are turning more and more to technology to meet specific needs such as eliminating recurring tasks or increasing productivity and efficiency. While there is a plethora of software available on the market for payroll management, communication within different business units, and marketing automation, companies are increasingly looking to develop customized software solutions to meet specific...

Bracket Show

We present to you our Bracket Show project, its origin, its content and our wishes for the future.
